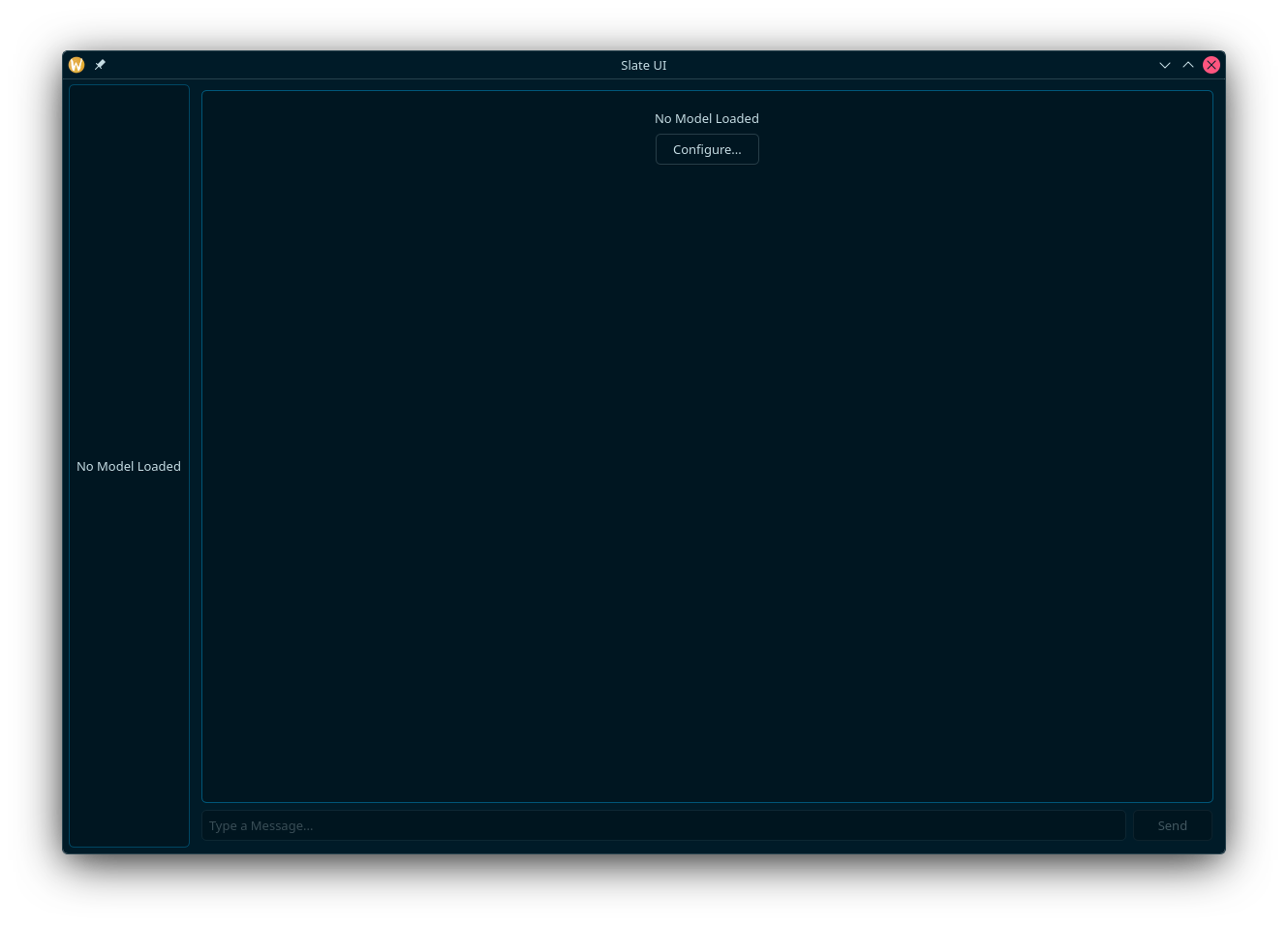

Slate UI

Status: in progress

Slate UI is a native Qt desktop application for easy interaction with locally hosted large language models. Built with modern C++ and Qt6, it provides a clean, efficient offline interface for working with local LLMs.

What it is

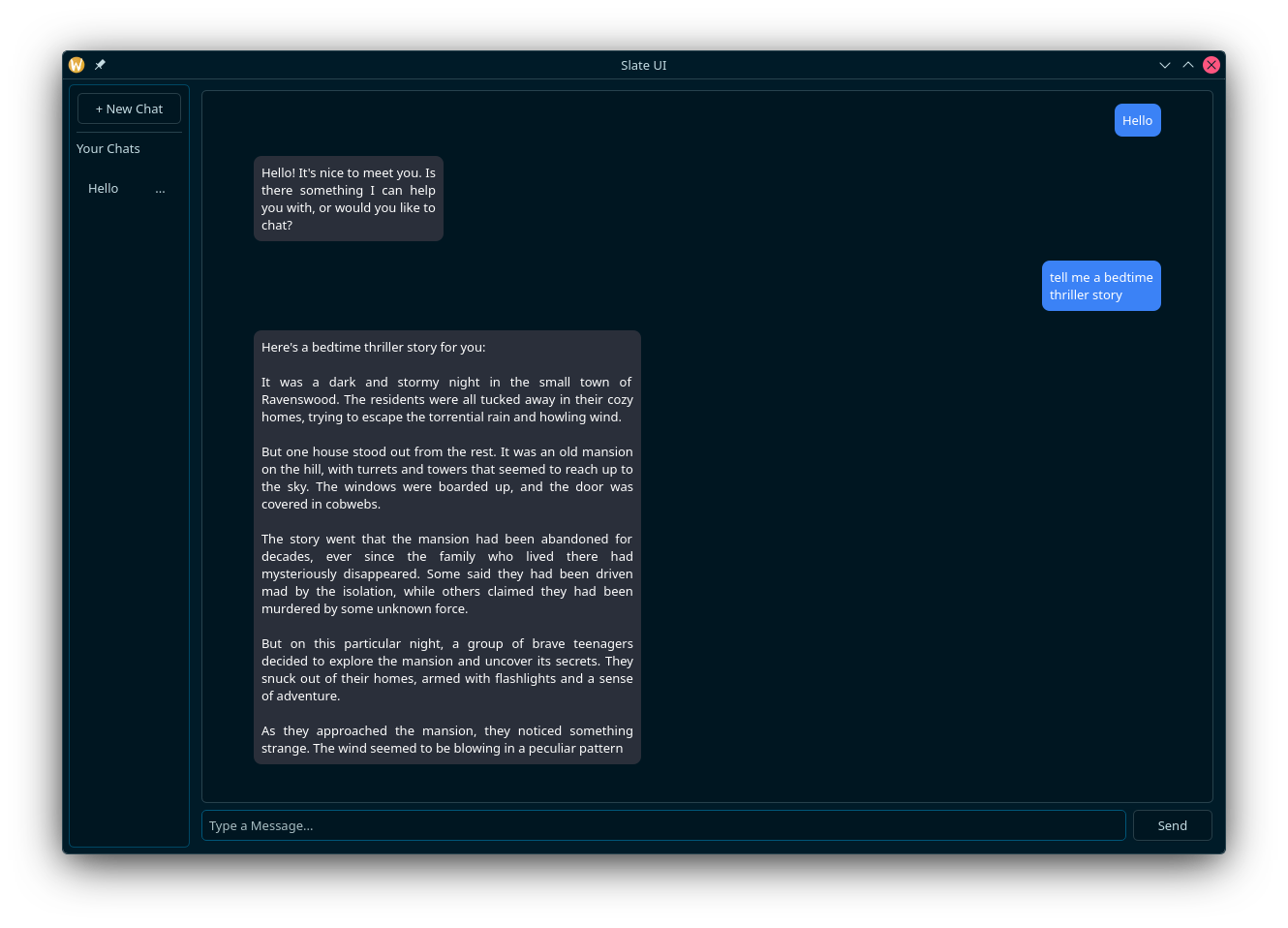

Slate UI is a desktop chat interface for locally hosted large language models. It uses Qt6 for the GUI and `llama.cpp` / `ggml` for local inference. It supports configurable model presets and a chat-bubble-style conversation view, and it runs entirely offline with no external API dependencies.

Features

- Clean chat-bubble UI for conversing with local LLMs

- Local model inference via `llama.cpp` / `ggml` (GGUF model format)

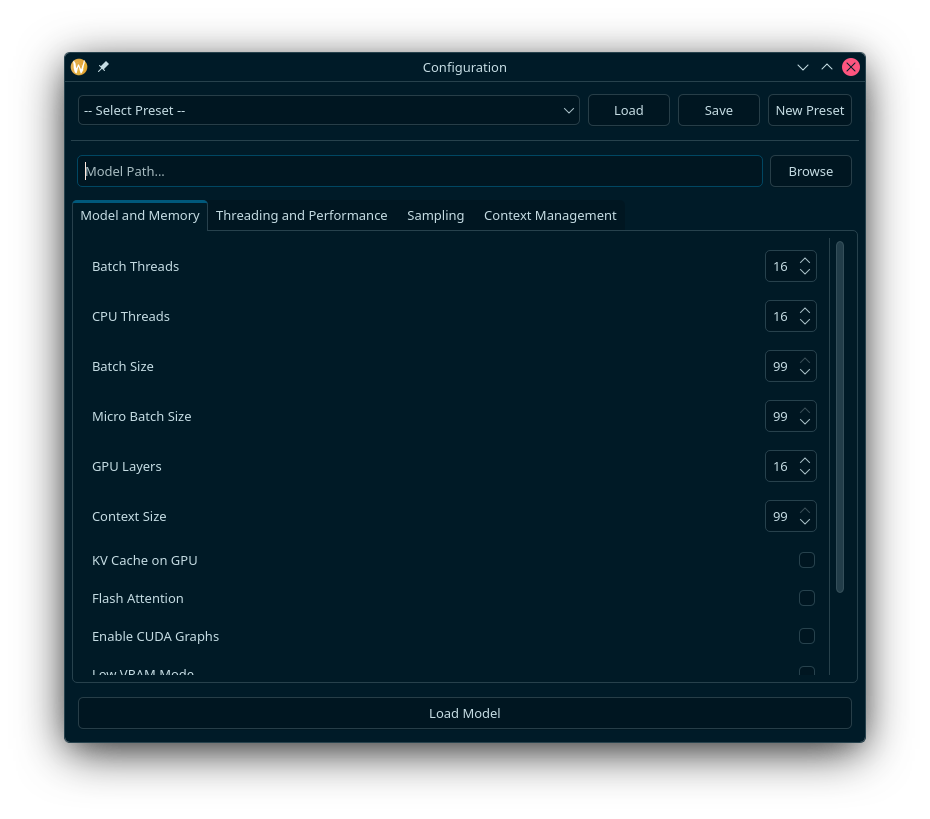

- Configurable model presets (temperature, context size, etc.)

- Configuration window for model and inference settings

- Cross-platform support (Linux, macOS, Windows)
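To make the idea of a model preset concrete, here is a minimal sketch in standard C++17. It is not taken from the Slate UI source: the `ModelPreset` type, its field names, the default values, and the clamping ranges in `sanitized` are all assumptions chosen for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <string>

// Hypothetical preset type; names, defaults, and ranges are illustrative
// assumptions, not Slate UI's actual data model.
struct ModelPreset {
    std::string name;            // label for the preset
    std::string modelPath;       // path to a GGUF model file
    float temperature = 0.8f;    // sampling temperature
    int contextSize = 4096;      // context window, in tokens
};

// Return a copy of the preset with values clamped into the assumed ranges,
// so that settings entered by the user cannot reach the inference backend
// with out-of-range values.
inline ModelPreset sanitized(ModelPreset p) {
    p.temperature = std::clamp(p.temperature, 0.0f, 2.0f);
    p.contextSize = std::clamp(p.contextSize, 256, 131072);
    assert(p.temperature >= 0.0f && p.contextSize > 0);
    return p;
}
```

A configuration window could pass whatever the user typed through `sanitized` before saving or applying it. Keeping the preset as a plain value type also makes it straightforward to copy, compare, and later serialize to a configuration file.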

Planned

- Local data storage and configuration files

- Multiple accounts support

- Vision model integration

- Code execution and syntax highlighting

- Browser tool usage integration

- Agentic mode

- Local web GUI server

- Headless usage with API for other applications

- VSCode extension

- Firefox extension

Technologies

- C++17

- CMake (build system)

- vcpkg (dependency management)

- Qt6 (GUI framework)

- llama.cpp / ggml (local inference runtime), GGUF (model file format)